Market pulse

Noisy, congested, hard to coordinate.

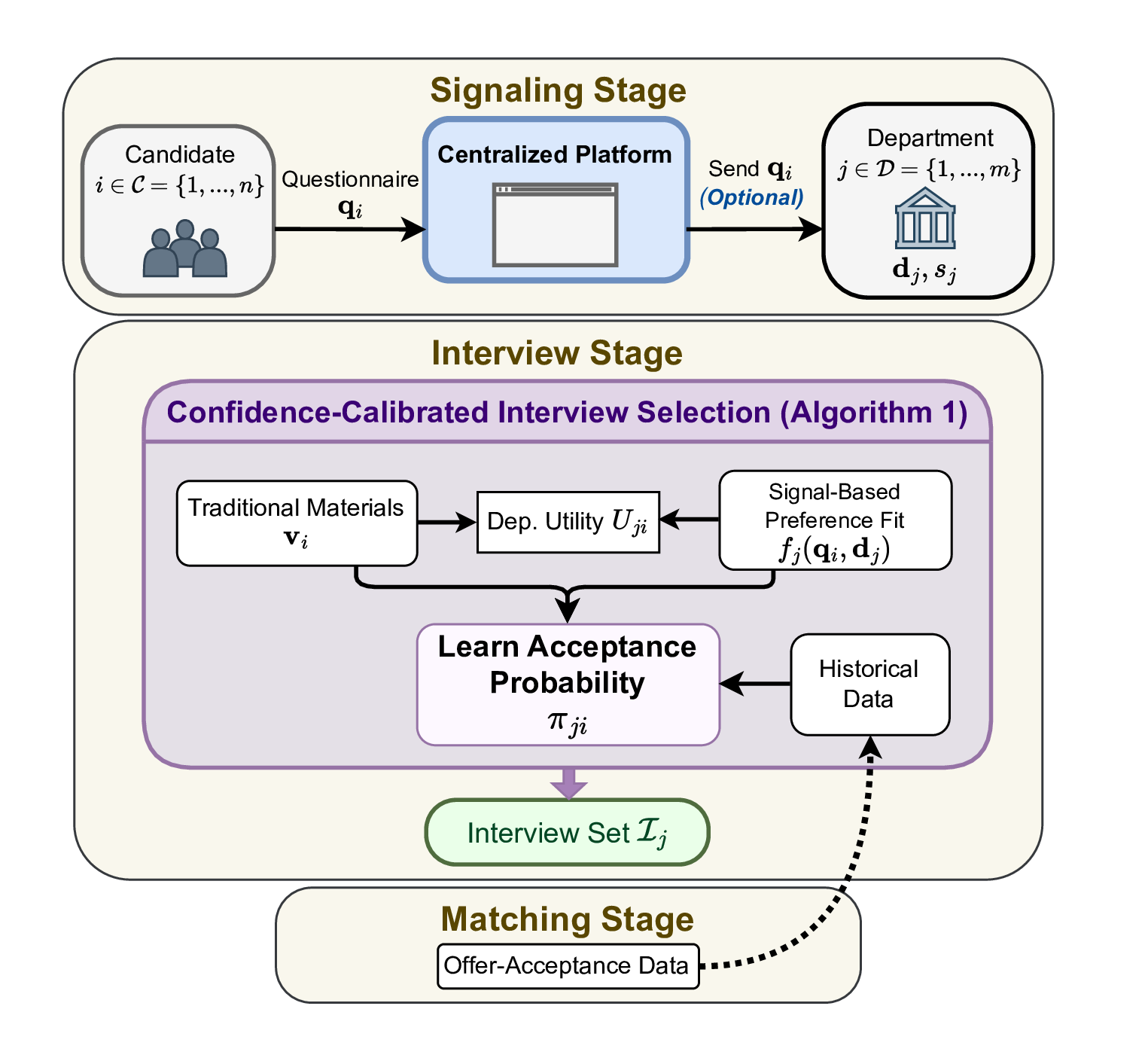

Departments cannot identify genuine interest, and candidates cannot credibly signal fit. As a result, interviews are allocated with too little information.

- Offers concentrate on a few candidates

- Interviews are wasted on low-probability matches

- Ideal pairs fail to connect